Know Your Keywords and Categories

Before you get started categorizing the terms that come to your site, you should know what keywords you are targeting, and the combinations of terms as well. I'm going to use a flower shop's website as an example for this particular blog post. Categorizing is something you can do with any website. At the very least, you can categorize terms into "Broad" and "Branded", to get you started.

Most keyword tools can help you establish what categories to target. Google's Keyword Tool or WordTracker are just a couple of the many tools available on the web.

Another way to figure out terms that fit in categories is by grabbing search data (referring terms in Google Analytics) on your site for the past few months or year. I personally spent some time going through and categorizing keywords in Excel by using the filters and then having the sheet show all words including "anniversary" for terms around "anniversary flowers". It takes a lot of work and time, but in the long run you will have a more accurate account of the terms you will need to do the Lookup against.
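The Excel filter step above (show every term containing "anniversary") can also be sketched in a few lines of Python. This is only an illustration under assumptions: the function name `categorize_by_substring`, the sample terms, and the marker words are made up for the flower shop example and are not part of the template.

```python
def categorize_by_substring(keywords, marker_to_category):
    """Give each keyword the first category whose marker word it contains."""
    result = {}
    for keyword in keywords:
        lowered = keyword.lower()
        result[keyword] = ""  # blank means "not categorized yet"
        for marker, category in marker_to_category.items():
            if marker in lowered:
                result[keyword] = category
                break
    return result


# Hypothetical referring terms for the flower shop example.
sample_terms = [
    "anniversary flowers",
    "cheap anniversary flowers online",
    "wedding bouquet",
    "flower shop",
]
# Hypothetical marker words, one per category.
markers = {"anniversary": "Anniversary", "wedding": "Wedding"}

categorized = categorize_by_substring(sample_terms, markers)
for term, category in categorized.items():
    print(f"{term!r} -> {category or '(uncategorized)'}")
```

Terms that match no marker stay blank, so they are easy to spot and review by hand, the same way you would in the filtered sheet.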

Setting Up Your Template


Now that you have all the terms possible in all of your categories it's time to start setting up your template. You are going to want to Download the template I have set up in Excel. You can start from a fresh Excel document if you want, but the template has directions (in case you lose this blog post somehow) and the Lookup formula is in there.

Once you have downloaded the template it's time to get it set up to work for your keywords.

In the following steps, I am going to walk you through setting up the template and then categorizing the terms. If you don't already have terms you can use, I have a zip file you can download so you can walk through the example with me and get familiar with how this works.

Copy your first set of categorized terms and paste them into the first Tab, marked "Broad". Since nearly every site has a "Broad" category of terms, I figure that's the best one to get started with. In this example, "flower shop", "online flower shop", and "best flower shop" are the terms that fit under the Broad category.

If you have the .zip folder downloaded, open up the "Terms" Excel doc and you will see the words already categorized for you. The categories are "Broad", "Branded", "Birthday", "Anniversary", and "Wedding". Click the Drop Down next to "Category", click "Select All" (to deselect all), and then click "Broad". The list will now be filtered to show only the terms in the "Broad" category.

Next select all of the terms in the "Keyword" list - copy and paste them into the "Broad" Tab.

We will then sort the terms in alphabetical order. An exact-match Lookup (the FALSE argument in the string we set up later) does not strictly require sorted data, but a sorted list makes duplicates and typos much easier to spot.

Highlight the Column with your keywords

Click "data" > "sort"

Select "My data has headers"

Under "sort by", select the column your keywords are in (should be column A)

Click OK

Double click the Tab and rename it with the one word name of your category.

Highlight all of your keywords in the column (just the cells that have words, not any blank cells).

Type the name of the category (stick to one word naming) into the Name Box, the field at the upper left next to the formula bar, and press Enter. You have now named your table.

Do this for "Branded" and the other categories as well. You are going to have to create a new tab in the template to fit all the categories.

If you have not downloaded the .zip file and are working off of your own terms, creating new tabs and naming them is probably going to be something you will need to do. But don't worry, the template will still work.

Now that you have all of your keywords in your Template's Tabs with names and sorted it's time to set up your Lookup string.

Setting up Your Lookup

The way the Lookup works in this case is we are going to ask Excel to look at one Keyword (one cell) and match it up to one of the terms in the Tabs we have set up. If it matches one of those terms then we tell Excel to place the word into that Cell. If it doesn't, then we just leave that cell blank.

The string looks like this:

=IF(ISNA(VLOOKUP(B2,Broad,Broad!A$2:Broad!A$999998,FALSE)),"","Broad")

- B2 is the cell of the keyword we want to look for.

- the first "Broad" is the Table name we want to look for that keyword in.

- Broad!A$2:Broad!A$999998 is the Tab and range that the Table exists in.

- FALSE is telling the Lookup to do an exact match. TRUE would do an approximate match, returning the closest entry at or below the Keyword in a sorted list instead of requiring the same term, so in this case it won't work.

- We leave the ,"", as a blank - but you can put "not categorized" or "misc" to show that it isn't in a category. Though for our purposes here, we keep it blank.

- ,"Broad" is telling Excel to put the word "Broad" in the cell if the keyword matches one of those in the Broad Table or Tab.
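For readers who find code easier to follow than spreadsheet formulas, the logic described in the list above can be sketched in Python. This is an illustration of the idea rather than what Excel does internally: `lookup_category` and the sample list are hypothetical names, and the case-insensitive comparison is there because VLOOKUP ignores letter case.

```python
def lookup_category(keyword, table_terms, category_name, blank=""):
    """Return category_name when keyword exactly matches a term in the table.

    Mirrors IF(ISNA(VLOOKUP(..., FALSE)), "", "Broad"): a match yields the
    category name, no match yields the blank value.
    """
    # Compare case-insensitively, as Excel's VLOOKUP does.
    normalized = {term.casefold() for term in table_terms}
    return category_name if keyword.casefold() in normalized else blank


# Hypothetical contents of the "Broad" Tab.
broad_terms = ["best flower shop", "flower shop", "online flower shop"]

print(repr(lookup_category("flower shop", broad_terms, "Broad")))      # 'Broad'
print(repr(lookup_category("wedding flowers", broad_terms, "Broad")))  # ''
print(repr(lookup_category("wedding flowers", broad_terms, "Broad", blank="misc")))  # 'misc'
```

The `blank` argument plays the role of the `""` in the string: swap it for "misc" or "not categorized" if you would rather flag unmatched terms.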

See - it's that easy...

What you are going to do next is replace the word "Broad" or "Cat1" with the name of your table, Tab, and category. This is why we name the Table, the Tab, and the Category the same so that our life is much easier when setting this string up.
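If you have many categories, swapping the name by hand gets tedious. As a rough sketch, the string can be generated for each category with a small Python helper. The helper name `build_formula` and the category list are assumptions for this example, and the output is just text to paste into Excel.

```python
# The Lookup string from this post, with the shared Table/Tab/Category
# name replaced by a placeholder.
FORMULA_TEMPLATE = (
    '=IF(ISNA(VLOOKUP(B2,{name},{name}!A$2:{name}!A$999998,FALSE)),"","{name}")'
)


def build_formula(name):
    """Fill in the one-word name shared by the Table, the Tab, and the Category."""
    if " " in name:
        raise ValueError("stick to one word naming: " + repr(name))
    return FORMULA_TEMPLATE.format(name=name)


# Categories from the flower shop example.
categories = ["Broad", "Branded", "Birthday", "Anniversary", "Wedding"]
formulas = {name: build_formula(name) for name in categories}

for name, formula in formulas.items():
    print(name, formula)
```

Because the Table, the Tab, and the Category all share one name, a single placeholder is enough to produce every variant of the string.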

Now your template is ready for you to paste some keywords with data and grab some numbers.

Gathering Your Data

Open up your Google Analytics account - if you don't have Google Analytics, pretty much any tracking tool that has a list of referring terms with some sort of data is fine. You can expand and contract the columns to the right of the terms as you wish. The template you will download will have the columns set up just for the purpose of exporting referring terms with visits and such from Google Analytics though.

Log into your Google Analytics account.

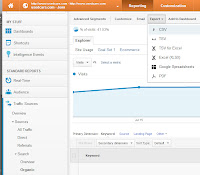

Click "Traffic Sources" > "Sources" > "Search" > "Organic"

Select the date range you would like to report on.

Scroll to the bottom of the report and show 5,000 rows.

Scroll back to the top and click "Export", then select "CSV".

After the file has downloaded, open it in Excel.

Highlight JUST the cells that include the keywords and your data (ignore the first few at the top with date and information, and the bottom that summarize the data and below).

Copy those cells, and paste into your "Master" Tab.

Note: If you have multiple dates you would like to track, you can export the different date ranges, and then add which keywords go with what date in the Master Tab. This will allow you to see trends of categories.

I added an Excel doc called "Analytics Organic Search Traffic" with some terms and fake data that you can play with. It has three tabs, one for each day's data, and each tab is labeled with its date. Start with just one day and play with that to get familiar with percentages. From there you can work with all three dates to see which categories are trending up and which are trending down.
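To make the trend idea concrete, here is a minimal Python sketch that totals visits per category for each date. Everything in it is invented for illustration: the function name, the dates, and the visit counts are not taken from the example file.

```python
from collections import defaultdict


def visits_by_date_and_category(rows):
    """Sum visits for each (date, category) pair.

    rows is an iterable of (date, category, visits) tuples, the same three
    pieces of information kept per keyword in the Master Tab.
    """
    totals = defaultdict(lambda: defaultdict(int))
    for date, category, visits in rows:
        totals[date][category] += visits
    # Convert to plain dicts so the result prints and compares cleanly.
    return {date: dict(per_category) for date, per_category in totals.items()}


# Made-up rows: one per keyword per day, already categorized.
fake_rows = [
    ("day 1", "Broad", 120),
    ("day 1", "Broad", 30),
    ("day 1", "Wedding", 45),
    ("day 2", "Broad", 110),
    ("day 2", "Wedding", 80),
]

trend = visits_by_date_and_category(fake_rows)
for date, per_category in trend.items():
    print(date, per_category)
```

Reading the totals for one category across the dates shows whether it is trending up or down, which is the same view a pivot table by date and category gives you in Excel.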

Completing Your Lookup

Now that you have copied and pasted the keywords into the "Master" Tab it's time to get all of those terms categorized.

Select the top row with your categories and your "All Categories" cell

Copy just those cells in the top row

Highlight the next row (same cells just below) and hold down the "shift" key

Scroll down to the last keyword record

Holding down the shift key select the last cell under the "All categories" - this highlights all of those cells for those categories to Lookup the keywords.

Hit "CTRL+V" on your keyboard (this quickly pastes the Lookup formulas for each line)

Be patient, as it may take a while for your Lookup to complete (depending on how many keywords, and records you have)

The "Master" Tab should look something like this:

Playing With Your Data

The most efficient way to gather information from your data is to copy the entire "Master" Tab and paste as values into a new Excel sheet. This way you won't have to wait for the Lookup to complete each time you sort, pivot, etc.

Click the top left "Arrow" in the "Master" Tab

Right Click and select "Copy"

Open a new Excel Doc

Right Click and select

From here you can create pivot tables then sort them into pie charts, graphs, and all sorts of fun reports to see how your keywords are performing.

I personally like to start with a quick pie chart to see which category of terms brings in the most traffic. At times we will have a drop or rise in traffic, and it's good to understand which categories of terms are fluctuating. Copying and pasting terms by date (weeks, months, or even a set of a few days) helps me see which categories are fluctuating on a timeline trend. Knowing which categories bring in the most traffic, I can then decide which parts of the website we need to focus our efforts on to increase traffic.
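The numbers behind that pie chart are just each category's share of total visits. A small Python sketch of the arithmetic, using invented visit counts and a hypothetical helper name, looks like this:

```python
def category_shares(visits_by_category):
    """Return each category's share of total visits as a percentage."""
    total = sum(visits_by_category.values())
    if total == 0:
        # No traffic at all: avoid dividing by zero.
        return {category: 0.0 for category in visits_by_category}
    return {
        category: round(100 * visits / total, 1)
        for category, visits in visits_by_category.items()
    }


# Made-up totals for three of the flower shop categories.
fake_totals = {"Broad": 500, "Branded": 300, "Wedding": 200}

shares = category_shares(fake_totals)
for category, percent in shares.items():
    print(f"{category}: {percent}%")
```

With these sample numbers, Broad accounts for half of the traffic, which is the kind of quick read the pie chart is meant to give.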

See how much fun categorizing your terms can be?

Now that I have a template I work off of, when traffic goes up I can quickly categorize the terms and let our executives know if our recent efforts have worked.